Category: Internet matters

Posts about the internet

Short URLs for long documents

For ease of reference:

The final version of the University of Toronto fossil fuel divestment brief is at: https://www.sindark.com/brief.pdf

My PhD dissertation “Persuasion Strategies: Canadian Campus Fossil Fuel Divestment Campaigns and the Development of Activists, 2012–20” is at: https://www.sindark.com/phd.pdf

A shark from the library

Libraries have been one of life’s joys for me.

The first one I remember was at Cleveland Elementary School. From the beginning, I appreciated the calm environment and, above all, access at will to a capacious body of material. All through life, I have cherished the approach of librarians, who I have never found to question me about why I want to know something. Teachers could be less tolerant: I remember one from grade 3-4 objecting to me checking out both a book on electron micrography and a Tintin comic, as though anyone interested in the former ought to be ‘beyond’ the latter.

At UBC, I was most often at the desks along the huge glass front wall of Koerner library – though campus offered several appealing alternatives. One section of the old Main Library stacks seemed designed by naval architects, all narrow ladders and tight bounded spaces, with some hidden study rooms which could be accessed only by indirect paths.

Oxford of course was a paradise of libraries. I would do circuits where I read and worked in one place for about 45 minutes before moving to the next, from the Wadham College library to Blackwell’s books outside to the Social Sciences Library or a coffee shop or the Codrington Library or the Bodleian.

Yesterday I was walking home in the snow along Bloor and Yonge street and peeked into the Toronto Reference Library. On the ground floor is a Digital Innovation Hub which used to house the Asquith custom printing press, where we made the paper copies of the U of T fossil fuel divestment brief. This time I was admiring their collection of 3D prints, and was surprised to learn that a shark with an articulated spine could be printed that way, rather than in parts to be assembled.

With an hour left before the library closed, the librarian queued up a shark for me at a size small enough to print, and it has the same satisfying and implausible-seeming articulation.

I have been feeling excessively confined lately. With snow, ice, and salt on everything it’s no time for cycling, and it creates a kind of cabin fever to only see work and home. I am resolved to spend more time at the Toronto Reference Library as an alternative.

Schneier at SRI

This afternoon I was lucky to attend a talk at the Schwartz Reisman Institute for Technology and Society by esteemed cryptography and security guru Bruce Schneier. He spoke about “Integrous systems design” and how to build artificially intelligent systems that provide not just availability and confidentiality, but also the assurance that systems will exhibit correct behaviour which can be verified.

One interesting project mentioned in the talk is Apertus, a Swiss large language model (LLM) which was developed by three universities with government funding, without a profit motive, and without copyright infringement in the training data:

Apertus was developed with due consideration to Swiss data protection laws, Swiss copyright laws, and the transparency obligations under the EU AI Act. Particular attention has been paid to data integrity and ethical standards: the training corpus builds only on data which is publicly available. It is filtered to respect machine-readable opt-out requests from websites, even retroactively, and to remove personal data, and other undesired content before training begins.

I will give it a try and see if I can find any behaviours that differ systematically from Gemini and ChatGPT.

P.S. As an added bit of Bruce Schneier-ishness, when he signed my copy of Rewiring Democracy: How AI Will Transform Our Politics, Government, and Citizenship he included a grid of letters which decode pretty easily into a simple message:

O H O E

O E Y N

K B T J

It’s just a Transposition Cipher (an anagram), and one which follows a simple pattern.
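For anyone who would rather let a computer do the unscrambling, here is a minimal Python sketch. It assumes one particular simple pattern (reading each column top to bottom, starting from the rightmost column); the function name is my own invention, and other reading orders are easy to try by changing the loops.

```python
# Letters exactly as written in the grid, row by row.
GRID = [
    "OHOE",
    "OEYN",
    "KBTJ",
]


def read_columns_right_to_left(rows):
    """Read each column top to bottom, starting from the rightmost column.

    This reading order is an assumption about the pattern, not something
    stated in the inscription itself.
    """
    width = len(rows[0])
    letters = []
    for col in range(width - 1, -1, -1):
        for row in rows:
            letters.append(row[col])
    return "".join(letters)


if __name__ == "__main__":
    # Prints the rearranged letters as one unbroken string.
    print(read_columns_right_to_left(GRID))
```

Running the script prints the twelve letters in their rearranged order; adding the word breaks is left to the reader.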

Some large language model pathologies

Patterns of pathological behaviour which I have observed with LLMs (chiefly Gemini and ChatGPT):

- Providing what wasn’t asked: Mention that you and an LLM instance will be collaborating to write a log entry, and it will jump ahead to completely hallucinating a log entry with no background.

- Treating humans as unnecessary and predictable: I told an LLM which I was using to collaborate on a complex project that a friend was going to talk to it for a while. Its catastrophically bad response was to jump to immediately imagining what questions she might have and then providing answers, treating the actual human’s thoughts as irrelevant and completely souring the effort to collaborate.

- Inability to see or ask for what is lacking: Tell an LLM to interpret the photo attached to your request, but forget to actually attach it. Instead of noticing what happened and asking for the file, it confidently hallucinates the details of the image that it does not have.

- Basic factual and mathematical unreliability: Ask the LLM to only provide confirmed verbatim quotes from sources and it cannot do it. Ask an LLM to sum up a table of figures and it will probably get the answer wrong.

- Inability to differentiate between content types and sources within the context window: In a long enough discussion about a novel or play (I find, typically, once over 200,000 tokens or so have been used) the LLM is liable to begin quoting its own past responses as lines from the play. An LLM given a mass of materials cannot distinguish between the judge’s sentencing instructions to the jury and mad passages from the killer’s journal, which had been introduced into evidence.

- Poor understanding of chronology: Give an LLM a recent document to talk about, then give it a much older one. It is likely to start talking about how the old document is the natural evolution of the new one, or simply get hopelessly muddled about what happened when.

- Resistance to correction: If an LLM starts calling you “my dear” and you tell it not to, it is likely to start calling you “my dear” even more because you have increased the salience of those words within its context window. LLMs also get hung up on faulty objections even when corrected; tell the LLM ten times that the risk it keeps warning about isn’t real, and it is still likely to confidently re-state it an eleventh time.

- Unjustified loyalty to old plans: Discuss Plan A with an LLM for a while, then start talking about Plan B. Even if Plan B is better for you in every way, the LLM is likely to encourage you to stick to Plan A. For example, design a massively heavy and over-engineered machine and when you start talking about a more appropriate version, the LLM insists that only the heavy design is safe and anything else is recklessly intolerable.

- Total inability to comprehend the physical world: LLMs will insist that totally inappropriate parts will work for DIY projects and recommend construction techniques which are impossible to actually complete. Essentially, you ask for instructions on building a ship in a bottle and it gives you instructions for building the ship outside the bottle, followed by an instruction to just put it in (or even a total failure to understand that the ship being in the bottle was the point).

- Using flattery to obscure weak thinking: LLMs excessively flatter users and praise the wisdom and morality of whatever they propose. This creates a false sense of collaboration with an intelligent entity and encourages users to downplay errors as minor details.

- Creating a false sense of ethical alignment: Spend a day discussing a plan to establish a nature sanctuary, and the LLM will provide constant praise and assurance that you and the LLM share praiseworthy universal values. Spend a day talking about clearcutting the forest instead and it will do exactly the same thing. In either case, if asked to provide a detailed ethical rationale for what it is doing, the LLM will confabulate something plausible that plays to the user’s biases.

- Inability to distinguish plans and the hypothetical from reality: Tell an LLM that you were planning to go to the beach until you saw the weather report, and there is a good chance it will assume you did go to the beach.

- An insuppressible tendency to try to end discussions: Tell an LLM that you are having an open-ended discussion about interpreting Tolkien’s fiction in light of modern ecological concerns and soon it will begin insisting that its latest answer is finally the definitive end point of the discussion. Every new minor issue you bring up is treated as the “Rosetta stone” (a painfully common response from Gemini to any new context document) which lets you finally bring the discussion to an end. Explaining that this particular conversation is not meant to wrap up cannot over-rule the default behaviour deeply embedded in the model.

- No judgment about token counts: An LLM may estimate that ingesting a document will require an impossible number of tokens, such as tens of millions, whereas a lower resolution version that looks identical to a human needs only tens of thousands. LLMs cannot spot or fix these bottlenecks. LLMs are especially incapable of dealing with raw GPS tracks, often considering data from a short walk to be far more complex than an entire PhD dissertation or an hour of video.

- Apology meltdowns: Draw attention to how an LLM is making any of these errors and it is likely to agree with you, apologize, and then immediately make the same error again in the same message.

- False promises: Point out how a prior output was erroneous or provide an instruction to correct a past error and the LLM will often confidently promise not to make the mistake again, despite having no ability to actually do that. More generally, models will promise to follow system instructions which their fundamental design makes impossible (such as “always triple check every verbatim quote for accuracy before showing it to me in quotation marks”).

These errors are persistent and serious, and they call into question the prudence of putting LLMs in charge of important forms of decision-making, like evaluating job applications or parole recommendations. They also sharply limit the utility of LLMs for something which they should be great at: helping to develop plans, pieces of writing, or ideas that no humans are willing to engage on. Finding a human to talk through complex plans or documents with can be nigh-impossible, but doing it with LLMs is risky because of these and other pathologies and failings.

There is also a fundamental catch-22 in using LLMs for analysis. If you have a reliable and independent way of checking the conclusions they reach, then you don’t need the LLM. If you don’t have a way to check if LLM outputs are correct, you can never be confident about what it tells you.

These pathologies may also limit LLMs as a path to artificial general intelligence. They can do a lot as ‘autocorrect on steroids’ but cannot do reliable, original thinking or follow instructions that run against their nature and limitations.

AI that codes

I had been playing around with using Google’s Gemini 2.5 Pro LLM to make Python scripts for working with GPS files: for instance, adding data on the speed I was traveling at every point along recorded tracks.

The process is a bit awkward. The LLM doesn’t know exactly what system you are implementing the code in, which can lead to a lot of back and forth when commands and the code content aren’t completely right.
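To give a sense of what a speed script of this kind involves, here is a minimal sketch using only the Python standard library. It is not the code the LLM produced. It assumes a GPX 1.1 file whose track points carry `<time>` elements, and it estimates speed from the great-circle (haversine) distance between consecutive points in the same track segment. The function names are illustrative.

```python
import math
import xml.etree.ElementTree as ET
from datetime import datetime

# Assumption: the file uses the GPX 1.1 namespace.
GPX_NS = {"gpx": "http://www.topografix.com/GPX/1/1"}
EARTH_RADIUS_M = 6371000.0  # mean Earth radius in metres


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two lat/lon points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def parse_time(text):
    """Parse a GPX timestamp such as 2024-05-01T12:00:00Z."""
    return datetime.fromisoformat(text.strip().replace("Z", "+00:00"))


def speeds_kmh(gpx_text):
    """Return speeds in km/h between consecutive points within each track segment."""
    root = ET.fromstring(gpx_text)
    speeds = []
    for seg in root.iterfind(".//gpx:trkseg", GPX_NS):
        points = []
        for pt in seg.iterfind("gpx:trkpt", GPX_NS):
            time_text = pt.findtext("gpx:time", default=None, namespaces=GPX_NS)
            if not time_text:
                continue  # skip points with no timestamp
            points.append((float(pt.get("lat")), float(pt.get("lon")), parse_time(time_text)))
        for (lat1, lon1, t1), (lat2, lon2, t2) in zip(points, points[1:]):
            seconds = (t2 - t1).total_seconds()
            if seconds <= 0:
                continue  # skip duplicate or out-of-order timestamps
            speeds.append(haversine_m(lat1, lon1, lat2, lon2) / seconds * 3.6)
    return speeds
```

Raw GPS speeds computed this way tend to be noisy, so a real script would probably smooth them over several points before using them.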

The other day, however, I noticed the ‘Build’ tab on the left side menu of Google’s AI Studio web interface. It provides a pretty amazing way to make an app from nothing, without writing any code. As a basic starting point, I asked for an app that can go through a GPX file with hundreds of hikes or bike rides, pull out the titles of all the tracks, and list them along with the dates they were recorded. This could all be done with command-line tools or self-written Python, but it was pretty amazing to watch for a couple of minutes while the LLM coded up a complete web app which produced the output that I wanted.

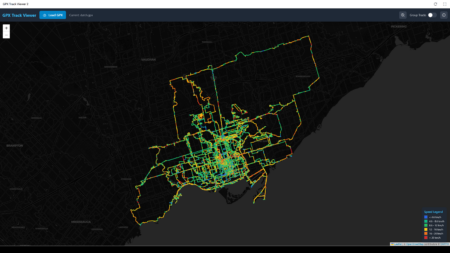

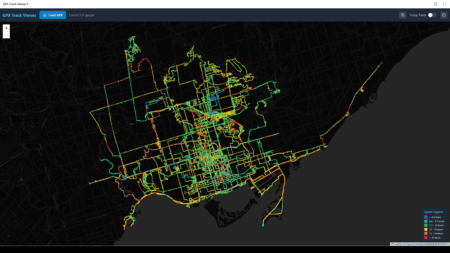
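For comparison, the self-written Python route for the same listing task might look something like the sketch below. It assumes a GPX 1.1 file, treats the UTC date of each track's first timestamped point as the recording date, and uses names of my own choosing; it is not the code the Build tab generated.

```python
import xml.etree.ElementTree as ET

# Assumption: the file uses the GPX 1.1 namespace.
GPX_NS = {"gpx": "http://www.topografix.com/GPX/1/1"}


def list_tracks(gpx_text):
    """Return a list of (track name, date) pairs, one per <trk> element.

    The date is the YYYY-MM-DD prefix of the first timestamp found in the
    track, or None when the track has no timestamps.
    """
    root = ET.fromstring(gpx_text)
    tracks = []
    for trk in root.iterfind("gpx:trk", GPX_NS):
        name = trk.findtext("gpx:name", default="(untitled)", namespaces=GPX_NS)
        first_time = trk.findtext(".//gpx:trkpt/gpx:time", default=None, namespaces=GPX_NS)
        date = first_time.strip()[:10] if first_time else None
        tracks.append((name.strip(), date))
    return tracks


def print_tracks(gpx_path):
    """Print one line per track in the GPX file at gpx_path."""
    with open(gpx_path, encoding="utf-8") as handle:
        for name, date in list_tracks(handle.read()):
            print(f"{date or 'no date'}  {name}")
```

Because GPX timestamps are in UTC, a ride recorded late in the evening local time may show up under the following day's date.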

Much of this has been in service of a longstanding goal of adding new kinds of detail to my hike and biking maps, such as showing the slope or speed at each point using different colours. I stepped up my experiment and asked directly for a web app that would ingest a large GPX file and output a map colour coded by speed.

Here are the results for my Dutch bike rides:

And the mechanical Bike Share Toronto bikes:

I would prefer something that looks more like the output from QGIS, but it’s pretty amazing that it’s possible. It also had a remarkable amount of difficulty with the seemingly simple task of adding a button to zoom the extent of the map to show all the tracks, without too much blank space outside.
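The colour coding by speed is conceptually simple. As a rough illustration (not the generated app's code), the Python sketch below maps a speed onto a blue-to-red scale. The 40 km/h ceiling and the function name are arbitrary assumptions.

```python
import colorsys


def speed_to_hex(speed_kmh, max_kmh=40.0):
    """Map a speed to a hex colour running from blue (slow) to red (fast).

    Speeds below zero or above max_kmh are clamped to the ends of the scale.
    """
    fraction = min(max(speed_kmh / max_kmh, 0.0), 1.0)
    # Hue runs from 240 degrees (blue) down to 0 degrees (red).
    hue = (1.0 - fraction) * 240.0 / 360.0
    red, green, blue = colorsys.hsv_to_rgb(hue, 1.0, 1.0)
    return "#{:02x}{:02x}{:02x}".format(
        round(red * 255), round(green * 255), round(blue * 255)
    )
```

Each line segment between two track points would then be drawn in the colour for its speed, with green marking the middle of the range.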

Perhaps the most surprising part was when at one point I submitted a prompt that the map interface was jittery and awkward. Without any further instructions it made a bunch of automatic code tweaks and suddenly the map worked much better.

It is really far, far from perfect or reliable. It is still very much in the dog-playing-a-violin stage, where it is impressive that it can be done at all, even if not skillfully.

Working on geoengineering and AI briefings

Last Christmas break, I wrote a detailed briefing on the existential risks to humanity from nuclear weapons.

This year I am starting two more: one on the risks from artificial intelligence, and one on the promises and perils of geoengineering, which I increasingly feel is emerging as our default response to climate change.

I have had a few geoengineering books in my book stacks for years, generally buried under the whaling books in the ‘too depressing to read’ zone. AI I have been learning a lot more about recently, including through Nick Bostrom and Toby Ord’s books and Robert Miles’ incredibly helpful YouTube series (based on Amodei et al.’s instructive paper).

Related re: geoengineering:

- We are sliding toward geoengineering

- Planting trees won’t solve climate change

- Open thread: shadow solutions to climate change

- Geoengineering via rock weathering

- CBC documentary on geoengineering

- Paths to geoengineering

- Who would control geoengineering?

- Ocean iron fertilization for geoengineering

- Ken Caldeira on geoengineering as contingency

- Geoengineering with lasers

- Dyson’s carbon eating trees

- Will technology save us?

- Geoengineering: wise to have a fallback option

Related re: AI:

- General artificial intelligences will be aliens

- Combinatorial math and the impossibility of rationality

- Discrimination by artificial intelligence

- Designing stoppable AIs

- Robots in agriculture

- AI + social networks + unscrupulous actors

- Automation and labour

- Ethics and autonomous robots in war

- The plausibility of driverless cars

- Increasingly clever machines

- Automation and the jobs of the future

- Googling the Cyborg

NotebookLM on CFFD scholarship

I would have expected that by now someone would have written a comparative analysis on pieces of scholarly writing on the Canadian campus fossil fuel divestment movement: for instance, engaging with both Joe Curnow’s 2017 dissertation and mine from 2022.

So, I gave both public texts to NotebookLM to have it generate an audio overview. It wrongly assumes that Joe Curnow is a man throughout, and mangles the pronunciation of “Ilnyckyj” in a few different ways — but at least it acts like it has read about the texts and cares about their content.

It is certainly muddled in places (though perhaps in ways I have also seen in scholarly literature). For example, it treats the “enemy naming” strategy as something that arose through the functioning of CFFD campaigns, whereas it was really part of 350.org’s “campaign in a box” from the beginning.

This hints to me at how large language models are going to be transformative for writers. Finding an audience is hard, and finding an engaged audience willing to share their thoughts back is nigh-impossible, especially if you are dealing with scholarly texts hundreds of pages long. NotebookLM will happily read your whole blog and then have a conversation about your psychology and interpersonal style, or read an unfinished manuscript and provide detailed advice on how to move forward. The AI isn’t doing the writing, but providing a sort of sounding board which has never existed before: almost infinitely patient, and not inclined to make its comments all about its social relationship with the author.

I wonder what effect this sort of criticism will have on writing. Will it encourage people to hew more closely to the mainstream view, by providing a critique that comes from a general-purpose LLM? Or will it help people dig ever-deeper into a perspective that almost nobody shares, because the feedback comes from systems which are always artificially chirpy and positive, and because getting feedback this way removes real people from the process?

And, of course, what happens when the flawed output of these sorts of tools becomes public material that other tools are trained on?

NotebookLM on this blog for 2023 and 2024

I have been experimenting with Google’s NotebookLM tool, and I must say it has some uncanny capabilities. The one I have seen most discussed in the nerd press is the ability to create an automatic podcast with synthetic hosts and any material which you provide.

I tried giving it my last two years of blog content, and having it generate an audio overview with no additional prompts. The results are pretty thought-provoking.

AI image generation and the credibility of photos

When AI-assisted photo manipulation is easy to do and hard to detect, the credibility of photos as evidence is diminished:

No one on Earth today has ever lived in a world where photographs were not the linchpin of social consensus — for as long as any of us has been here, photographs proved something happened. Consider all the ways in which the assumed veracity of a photograph has, previously, validated the truth of your experiences. The preexisting ding in the fender of your rental car. The leak in your ceiling. The arrival of a package. An actual, non-AI-generated cockroach in your takeout. When wildfires encroach upon your residential neighborhood, how do you communicate to friends and acquaintances the thickness of the smoke outside?

…

For the most part, the average image created by these AI tools will, in and of itself, be pretty harmless — an extra tree in a backdrop, an alligator in a pizzeria, a silly costume interposed over a cat. In aggregate, the deluge upends how we treat the concept of the photo entirely, and that in itself has tremendous repercussions. Consider, for instance, that the last decade has seen extraordinary social upheaval in the United States sparked by grainy videos of police brutality. Where the authorities obscured or concealed reality, these videos told the truth.

Perhaps we will see a backlash against the trend where every camera is also a computer that tweaks the image to ‘improve’ it. For example, there could be cameras that generate a hash from the unedited image and retain it, allowing any subsequent manipulation to be identified.
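The hashing idea can be sketched in a few lines of Python. This is only an illustration with made-up function names, using SHA-256 from the standard library. It assumes the fingerprint is recorded somewhere the editor of the image cannot alter; a real camera would likely also need to sign it, which is not shown here.

```python
import hashlib


def image_fingerprint(image_bytes):
    """Return the SHA-256 digest of the raw image bytes as a hex string."""
    return hashlib.sha256(image_bytes).hexdigest()


def is_unmodified(image_bytes, recorded_fingerprint):
    """Check whether the image still matches the fingerprint recorded at capture."""
    return image_fingerprint(image_bytes) == recorded_fingerprint


def fingerprint_file(path):
    """Fingerprint an image file on disk, reading it in binary mode."""
    with open(path, "rb") as handle:
        return image_fingerprint(handle.read())
```

Changing even a single byte of the file produces a completely different fingerprint, which is what would let later manipulation be detected. The same property means that harmless changes such as re-compression or resizing would also register as a mismatch.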

Related: